NVH trends & practical tips in Corona times

25 June 2020

About a year ago, we wrote about the trends in the automotive industry and their impact on NVH. As cliché as it sounds, the COVID-19 outbreak has brought some drastic changes to that earlier view. Nevertheless, it is highly relevant to review the potential impact this has on the way automotive NVH engineering is done.

Our previous article identified 5 major trends in automotive and their impact on NVH: powertrain electrification, lightweight materials, platform sharing, an increasing number of different vehicle models and the modular engineering approach. The impact of autonomous driving on NVH is less relevant for now, but it will eventually have a massive impact on the way we use cars (it’s worth noting that autonomous driving is expected to increase the importance of NVH). We are in the midst of these ‘trends’, which put tremendous pressure on the engineering departments that actually have to make this happen. Not only do engineers need to familiarize themselves with new materials and powertrains, they have to do so for 2 to 4 times more vehicle models than earlier this decade. For NVH departments the challenge is even greater, as NVH is traditionally best evaluated on fully integrated vehicles (that is, physical prototypes).

Of course the above trends did not change overnight. Rather, the COVID-19 outbreak throws in some additional challenges to cope with. With VIBES, we work with both OEMs and suppliers all over the world and see a number of new challenges for NVH engineers.

Trends & Challenges

- Travel restrictions make coordination between OEMs and suppliers increasingly difficult. Engineers from OEMs are not allowed to visit supplier facilities to perform the necessary checks and measurements.

- Due to supply chain disruptions, not all components are available at the (just-in-) time when they are required. Therefore, fewer full-vehicle prototypes are available, which is a challenge given that full-vehicle evaluation is the typical way to obtain proper NVH results. To overcome this, engineering departments can focus their efforts on computer simulations or ‘digital twins’, which do not require the presence of full physical prototypes. However, using solely simulated data for NVH predictions has inherent limitations. Creating proper computer models is extremely hard and can take months, and the level of detail required to eliminate NVH issues (especially at high frequencies) is in most cases impossible to realize.

- Uncertainty in manufacturing, the global economic situation and the cancelation of auto shows made many OEMs decide to postpone the launch and start of production (SOP) dates of new vehicles while advancing others. This requires a high level of agility from R&D departments to cope with all these changes, while they themselves are still figuring out how to best do their regular jobs.

- R&D budgets are either frozen or cut in favor of liquidity, partnerships are put on hold and lay-offs hit engineering departments.

- Work is postponed. Government regulations for short-time work (German: Kurzarbeit) require that employees do not perform work; otherwise companies lose the government subsidy. Work-from-home policies limit the ability to do physical testing and limit the use of dedicated tools present at the company facilities.

- Prototype and track testing is limited by travel restrictions and social distancing policies. Only a few people are allowed to be in a single vehicle at the same time. For some employees, this scenario brings some nice benefits.

As you notice, it’s a lot of change to cope with, and as VIBES we are dealing with a fair share of these topics in our engineering services projects. However, we don’t only see the difficulties, but we also see companies coping with these issues rather well. So, what do we see and what can we learn from these approaches?

Practical Tips

Flexibility and transparency in the engineering process are key. In practice, this boils down to 3 practical steps.

1 – Be a freak about documentation

Many engineers suffer from a special variety of the ‘Not Invented Here’ syndrome, where models, predictions and measurements that did not involve the engineer herself are not fully trusted – and therefore not used. Engineers like to have full control over the complete process, and in the end only fully trust analysis results based on their own data and their own analysis scripts. Now that measurements need to be outsourced to whoever can be present to physically perform the measurement (at a supplier, test-track, etc.), there is no other option than to work with data from other people. There are two key aspects to a successful implementation of this: clear documentation and objective data quality indicators.

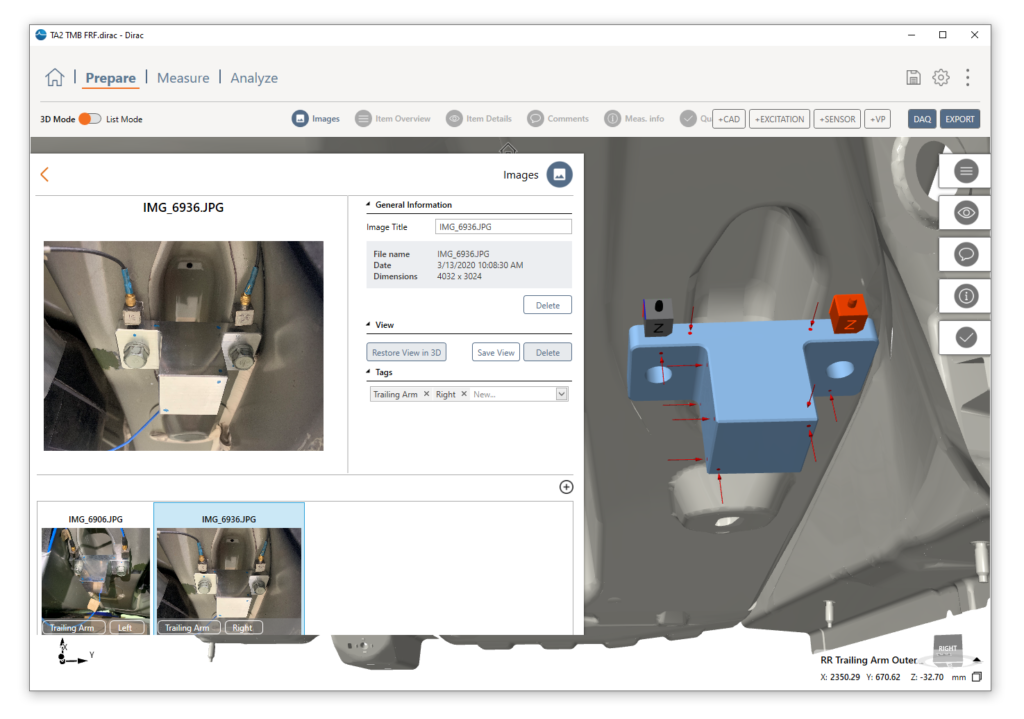

The handover of data to a colleague needs to be accompanied by clear documentation of all performed steps and observed anomalies. For measurements, our most successful clients switched to using CAD environments or the DIRAC-PREPARE module rather than old-fashioned Excel lists to get the transparency of the process up to the required level. They add structured photos and notes of the actual situation to ensure that any differences between ‘practice and theory’ can also be checked by people who were not present at the measurement. ‘Visual documentation’ simply prevents misunderstanding, and currently allows VIBES to prepare measurements in our offices and have partners perform the actual measurements all over the globe.

The DIRAC PREPARE module helps communicate test setups between the parties involved around the globe. Photos can be added later to keep track of any changes made during the measurement campaign.

2 – Use objective quality indicators

Especially for measured data, objective and independent quality indicators are crucial. The ultimate check for the whole process is to evaluate the end result with an independent cross-check. When differences are observed, the documentation of step 1 is crucial to find the cause. In practice VIBES uses a few approaches, depending on the project at hand:

- The virtual point reciprocity check is the perfect example: two independent sets of virtual point responses (not just sensors!) and corresponding forces should give the same result.

- When available, we compare measured results with simulated data from finite element models and try to truly understand any differences that appear (so: never simply blame ‘the lack of damping’ or ‘the sensor mass’ to sweep potential mistakes under the carpet).

- For a blocked-force characterization we use a similar approach: for every analysis we have at least a few independent validation measurements (according to ISO 20270) to verify the analysis procedure.
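To make the reciprocity check above more concrete, here is a minimal sketch in Python. It assumes the virtual point FRFs have already been assembled into a square matrix per frequency line, and it uses one coherence-style score (1 means perfectly reciprocal, 0 means equal magnitude but opposite sign). The function names, the toy 2-DOF system and the flipped-sign "error" are illustrative assumptions, not the exact procedure used in any particular tool.

```python
import numpy as np

def reciprocity_indicator(Y):
    """Coherence-style reciprocity score for every DOF pair and frequency.

    Y: complex FRF array of shape (n_freq, n_dof, n_dof), assumed to hold
    responses and forces at the same (virtual point) DOFs.
    Returns real values in [0, 1]; 1 means Y_ij equals Y_ji.
    """
    Yt = np.swapaxes(Y, 1, 2)                       # Y_ji for every Y_ij
    num = np.abs(Y + Yt) ** 2
    den = 2.0 * (np.abs(Y) ** 2 + np.abs(Yt) ** 2)
    score = np.ones_like(den)                       # define 0/0 as "reciprocal"
    np.divide(num, den, out=score, where=den > 0)
    return score

def two_dof_frf(freqs_hz):
    """Hypothetical toy system standing in for measured virtual point FRFs."""
    M = np.diag([1.0, 2.0])
    K = np.array([[3.0e5, -1.0e5], [-1.0e5, 1.0e5]])
    C = 1.0e-4 * K                                  # light proportional damping
    omega = 2.0 * np.pi * np.asarray(freqs_hz)[:, None, None]
    Z = K - omega**2 * M + 1j * omega * C           # dynamic stiffness per line
    return np.linalg.inv(Z)                         # receptance FRFs

freqs = np.linspace(10.0, 200.0, 50)
Y_good = two_dof_frf(freqs)

# Simulate a documentation mistake: one sensor direction noted with wrong sign.
Y_bad = Y_good.copy()
Y_bad[:, 0, 1] *= -1.0

score_good = reciprocity_indicator(Y_good)
score_bad = reciprocity_indicator(Y_bad)
print("worst score, clean data:  ", score_good.min())
print("worst score, flipped sign:", score_bad.min())
```

A score that drops for one specific DOF pair points directly at the channels to look up in the documentation from step 1, which is what makes such an indicator useful when the measurement was performed by someone else.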

3 – Have a back-up strategy (the modular approach)

Ideally, OEMs make accurate NVH predictions at the very start of a new development project and continuously optimize designs, knowing the impact on NVH. The reality is different though: all models are wrong, and only when the first prototype is available do you know how far off you are. If the availability of these prototypes is also uncertain, things become increasingly challenging.

Ideally, there’s an alternative, or ‘back-up plan’, for each component. If simulations and measurements are seamlessly combined, we decrease the dependency on either full simulations or full prototype measurements: we mix ‘n’ match with the information we have, while continuously updating our predictions once more reliable information becomes available. This rests on two things:

- a modular approach to combine different modeling strategies (for example using virtual points);

- “the Cloud” for global availability, access and version control of component and vehicle models.

Is a subsystem available but the full vehicle not yet? Measure it & combine the (more reliable!) data with simulations of the rest of the vehicle! Have a delay with a specific component? Use a simulated model instead! Who knew ‘agile’ would be relevant for NVH departments?
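The mix 'n' match idea can be sketched with frequency-based substructuring, where the FRFs of separate components are coupled at their interface regardless of whether each one was measured or simulated. The example below is a minimal sketch using the well-known Lagrange-multiplier (dual) coupling formula, Y_coupled = Y - Y Bᵀ (B Y Bᵀ)⁻¹ B Y. The two single-DOF components, their parameter values and the function name are hypothetical and chosen only so that the result can be checked by hand.

```python
import numpy as np

def couple_lm_fbs(Y_a, Y_b, B):
    """Couple two components with dual (Lagrange-multiplier) FBS.

    Y_a, Y_b: complex FRFs of shape (n_freq, n_a, n_a) and (n_freq, n_b, n_b).
    B: signed Boolean matrix (n_constraints, n_a + n_b) expressing
       interface compatibility, e.g. u_a - u_b = 0.
    Returns the coupled FRFs of shape (n_freq, n_a + n_b, n_a + n_b).
    """
    n_freq, n_a, _ = Y_a.shape
    n_b = Y_b.shape[1]
    Y = np.zeros((n_freq, n_a + n_b, n_a + n_b), dtype=complex)
    Y[:, :n_a, :n_a] = Y_a                 # uncoupled, block-diagonal FRFs
    Y[:, n_a:, n_a:] = Y_b
    BY = B @ Y                             # interface gap due to unit forces
    interface = BY @ B.T                   # interface flexibility B Y B^T
    return Y - (Y @ B.T) @ np.linalg.solve(interface, BY)

# Hypothetical components, one interface DOF each:
# A: "measured" mass on a spring to ground, B: "simulated" free mass.
m_a, k_a, m_b = 1.0, 1.0e5, 0.5
omega = 2.0 * np.pi * np.linspace(5.0, 100.0, 20)
Y_a = (1.0 / (k_a - omega**2 * m_a)).astype(complex)[:, None, None]
Y_b = (1.0 / (-omega**2 * m_b)).astype(complex)[:, None, None]

B = np.array([[1.0, -1.0]])                # enforce u_a = u_b
Y_coupled = couple_lm_fbs(Y_a, Y_b, B)

# Analytical reference: both masses rigidly joined on the same spring.
Y_expected = 1.0 / (k_a - omega**2 * (m_a + m_b))
print(np.allclose(Y_coupled[:, 0, 0] / Y_expected, 1.0))
```

Because the coupling only needs FRFs at the interface, a component model can later be swapped for a measured one (or the other way around) without touching the rest of the assembly. In practice the interface would have multiple DOFs per connection point, for example described with virtual points.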

In case you want to know more about the VIBES approach to NVH engineering, don’t hesitate to contact us! It’s literally our job to help you work this out.